When an AI Shopping Agent Makes a Bad Purchase, Who Actually Owns the Mistake?

Agentic commerce will not scale on recommendation quality alone. It will scale when shoppers, merchants, platforms, and payment intermediaries understand who owns the downside when an autonomous purchase goes wrong.

Most of the discussion around AI shopping focuses on recommendation quality. Can the model find the right product? Can it negotiate trade-offs? Can it personalize the shortlist? Those questions matter, but they are not the true gating factor for autonomous buying.

The harder question is what happens when the agent gets it wrong.

Thesis

Liability design, not just model intelligence, may be the real bottleneck for autonomous shopping. Until the ecosystem can explain who owns the downside, trust will lag behind technical progress.

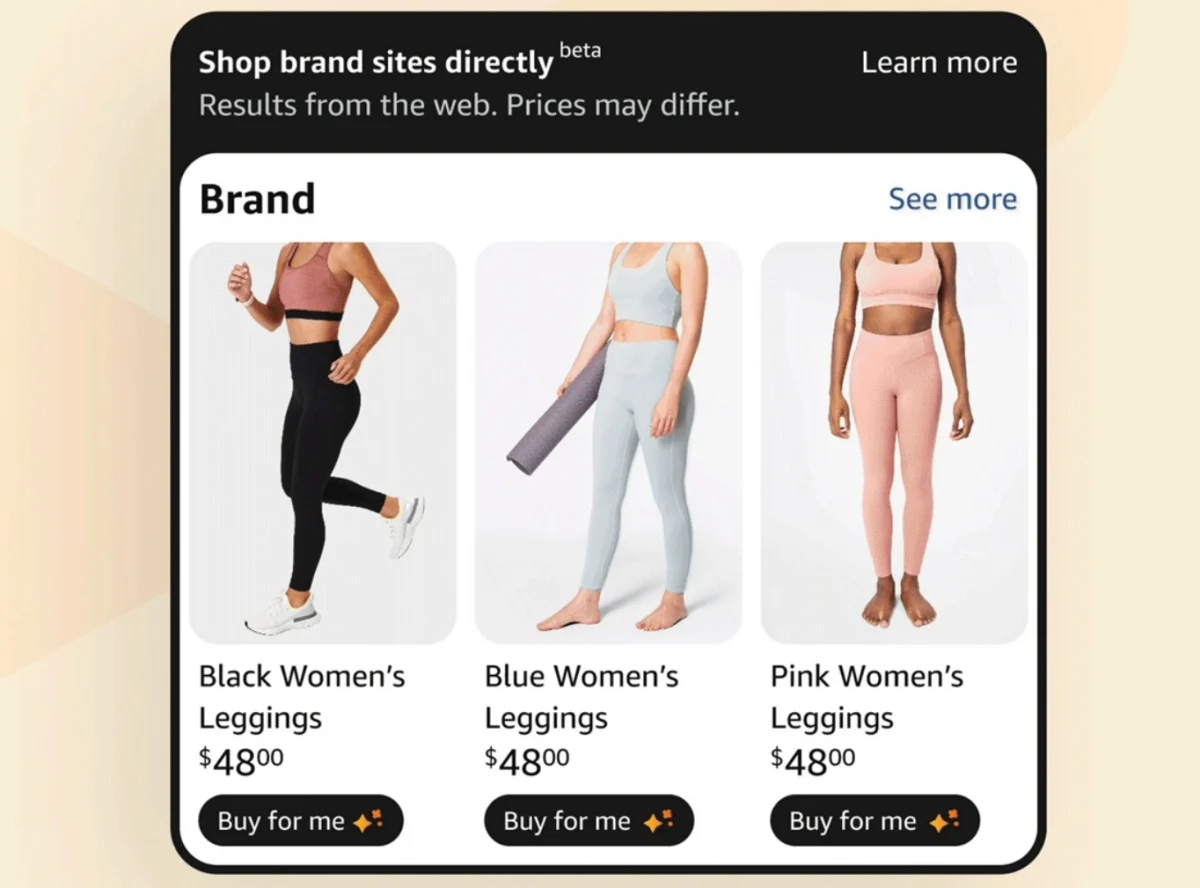

Assistant-led shopping is not the same as agent-executed purchasing

There is an enormous difference between an assistant that helps a shopper compare options and an agent that can actually commit money, trigger a subscription, or select a merchant. In the first case, the human still owns the final click. In the second, the system moves inside the transaction layer.

That shift changes the user's expectation. Once software is allowed to act, people stop judging it like a search engine and start judging it like a financial delegate. That means the wrong outcome is no longer a bad recommendation. It becomes a disputed purchase, a painful return, a chargeback, or a trust failure.

Why liability is strategic, not a legal footnote

Retail executives often treat liability as a terms-of-service issue for counsel to handle later. That is a mistake. Liability determines adoption because it shapes whether shoppers feel safe delegating intent. If users believe they will be left alone to unwind bad purchases, they will keep demanding a human confirmation layer even as the UX improves.

| Failure | Immediate pain | Strategic consequence |
|---|---|---|
| Wrong item | Return friction and support cost | Lower user trust in delegation |
| Wrong merchant | Fraud, fulfillment issues, counterfeit risk | Platform and marketplace distrust |
| Wrong price or renewal | Refunds, disputes, chargebacks | Higher transaction risk and regulatory attention |

Where responsibility could sit

Responsibility can land in at least five places: the user, the merchant, the platform, the payment provider, or the agent operator. That ambiguity is the problem. Every actor in the chain has an incentive to say the human authorized the outcome somewhere in the flow, even when that consent does not match the user's lived expectation.

- User: easiest legal fallback, weakest trust model.

- Merchant (retailer): often carries the visible customer-service burden because the order landed on its books.

- Platform or marketplace: may control recommendation logic and ranking but disclaim transactional responsibility.

- Payment provider: owns risk controls and dispute rules, but not product selection.

- Agent operator: may shape the decision path, but often hides behind interface or software disclaimers.

Risk concentrates at checkout and post-purchase

The liability debate intensifies at the moment of payment because checkout is where recommendation turns into obligation. Merchant-of-record structures, delegated credentials, return logic, and card-network dispute rules suddenly matter more than model outputs. That is also why chargebacks and refunds will become strategic data points in agentic commerce, not just operations metrics.

If the merchant is the face of the transaction but not the source of the decision, brands inherit downside without owning the intent. That is not a stable equilibrium.

Fine print will not solve the expectation gap

Terms of service can disclaim plenty. They cannot erase what the shopper believes happened. If an AI agent presents itself as a helpful delegate and then makes an obviously wrong call, users will not accept "you technically authorized this somewhere in settings" as a satisfying answer. The commerce stack will have to align legal consent with intuitive consent.

What brands should do now

- Clarify delegated checkout boundaries. Make it explicit when an agent is assisting versus acting.

- Publish machine-readable return and cancellation rules. The easier those are to retrieve, the lower the post-purchase ambiguity.

- Track agent-originated disputes separately. This will become an important signal for platform and payment negotiations.

- Pressure partners on accountability. If a platform wants agentic distribution, ask who absorbs fraud, subscription mistakes, and wrong-merchant outcomes.
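To make the first two recommendations concrete, here is a minimal Python sketch of what machine-readable return rules and an explicit delegation boundary might look like. Everything in it is an assumption for illustration: the `ReturnPolicy` and `DelegationLimits` field names, the `agent_may_act` helper, and the sample values are hypothetical and do not correspond to any existing standard or platform API.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ReturnPolicy:
    """Hypothetical machine-readable return and cancellation rules for one merchant."""
    merchant_id: str
    return_window_days: int
    restocking_fee_pct: float
    subscription_cancel_notice_days: int
    refund_method: str  # e.g. "original_payment" or "store_credit"


@dataclass(frozen=True)
class DelegationLimits:
    """Hypothetical boundaries a shopper sets for an agent acting on their behalf."""
    max_order_total: float
    allow_subscriptions: bool
    min_return_window_days: int


def agent_may_act(total: float, is_subscription: bool,
                  policy: ReturnPolicy, limits: DelegationLimits) -> bool:
    """Return True if the agent may check out without human confirmation."""
    if total > limits.max_order_total:
        return False
    if is_subscription and not limits.allow_subscriptions:
        return False
    # Only act autonomously when the purchase is easy enough to unwind.
    return policy.return_window_days >= limits.min_return_window_days


# Illustrative values only.
policy = ReturnPolicy("merchant-123", 30, 0.0, 7, "original_payment")
limits = DelegationLimits(max_order_total=150.0, allow_subscriptions=False,
                          min_return_window_days=14)

# The policy serializes to plain JSON that an agent could retrieve before checkout.
policy_json = json.dumps(asdict(policy), sort_keys=True)
```

When `agent_may_act` returns `False`, the flow would fall back to assisting: the agent prepares the order and the human makes the final click. The point of the sketch is that the boundary between assisting and acting is written down and checkable, not buried in settings.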

Agentic commerce will feel mature when the ecosystem can answer a simple question in plain English: if the AI buys the wrong thing, who fixes it? Until then, the liability layer will keep adoption slower than the demo layer suggests.

Frequently Asked Questions

Why is liability such a big issue for AI shopping?

Because delegated shopping turns recommendation errors into monetary outcomes. That changes the trust model for users and the risk model for merchants.

Can terms of service solve this?

No. Fine print can shape legal posture, but it does not remove the expectation that a delegated purchase should be reversible and accountable.

Who is most likely to absorb liability in early agentic commerce?

Retailers, because they are usually the merchant of record and the first point of contact for returns. But that creates an imbalance when the agent, not the merchant, drove the purchase decision.

How does this affect chargebacks and payment disputes?

Agent-originated purchases may increase chargeback rates if shoppers dispute transactions they feel they did not fully authorize. Payment networks will need new rules to distinguish agent-delegated purchases from traditional card-present or card-not-present transactions.

What should brands track now to prepare?

Separate agent-originated orders in your analytics. Track return rates, dispute rates, and customer satisfaction scores for AI-referred purchases independently from other channels.
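As a rough illustration of that separation, the Python sketch below computes return and dispute rates per channel from a list of order records. The record shape (`channel`, `returned`, `disputed`), the channel labels, and the sample orders are all assumptions made up for the example; a real pipeline would read these from an order or analytics system.

```python
from collections import defaultdict


def rates_by_channel(orders):
    """Compute return and dispute rates for each order channel."""
    counts = defaultdict(lambda: {"orders": 0, "returned": 0, "disputed": 0})
    for order in orders:
        bucket = counts[order["channel"]]
        bucket["orders"] += 1
        bucket["returned"] += int(order["returned"])
        bucket["disputed"] += int(order["disputed"])
    return {
        channel: {
            "return_rate": c["returned"] / c["orders"],
            "dispute_rate": c["disputed"] / c["orders"],
        }
        for channel, c in counts.items()
    }


# Made-up sample data: "agent" marks agent-originated orders (hypothetical label).
sample_orders = [
    {"channel": "agent", "returned": True, "disputed": True},
    {"channel": "agent", "returned": True, "disputed": False},
    {"channel": "agent", "returned": False, "disputed": False},
    {"channel": "agent", "returned": False, "disputed": False},
    {"channel": "web", "returned": True, "disputed": False},
    {"channel": "web", "returned": False, "disputed": False},
    {"channel": "web", "returned": False, "disputed": False},
    {"channel": "web", "returned": False, "disputed": False},
]

rates = rates_by_channel(sample_orders)
```

Keeping the channel as a first-class field on every order is the design choice that matters here. Once it exists, the same grouping extends to customer satisfaction scores or chargeback amounts without reworking the data.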

Will regulation eventually clarify AI shopping liability?

Likely, but not quickly. Consumer protection frameworks were built for human buyers. Adapting them to delegated AI purchasing will require new legal categories for consent, authorization, and accountability that do not yet exist.